Building a Post-Trade Compliance Dashboard: Rules, State, and the Reproducibility Problem

Ask a compliance system to prove what it concluded about a portfolio weeks ago, and why. The answer should be trivial. In most systems, it is not.

This is the central tension of post-trade compliance monitoring: the rules are straightforward, but the data they operate on is a moving target. Building a dashboard that evaluates rules correctly is a solved problem. Building one that can prove it evaluated them correctly, weeks or months after the fact, is a fundamentally different challenge.

What Post-Trade Compliance Actually Requires

Post-trade compliance operates in the gap between trade execution and ongoing portfolio management. Unlike pre-trade compliance — which blocks orders before they reach the market — post-trade monitoring evaluates settled positions. The portfolio has already changed. The question is whether the resulting state violates any investment guidelines, regulatory limits, or internal policies.

The distinction matters architecturally. Pre-trade systems sit in the order flow, make binary accept/reject decisions under latency pressure, and evaluate one proposed trade at a time. Post-trade systems run against the entire portfolio, often on a schedule, and must handle nuance: a position might warrant a warning rather than a hard failure, or a violation might be acknowledged and suppressed with justification rather than immediately remediated.

The Rule Book

A basic post-trade compliance rule book typically includes six categories of checks:

Concentration rules are the most common. They express limits like “no more than 40% in equities” or “no more than 5% exposure to any single issuer.” They require grouping holdings by a classification dimension — asset class, sector, country, currency, credit rating, or issuer — and computing each group’s weight as a percentage of total net asset value (NAV).
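The grouping-and-weighting step can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Holding` fields, `group_weights`, and `concentration_breaches` are hypothetical names. NAV is passed in explicitly on the assumption that it may include cash or accruals that do not appear as listed holdings.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Holding:
    security_id: str
    issuer: str
    asset_class: str
    market_value: float


def group_weights(holdings: list[Holding], dimension: str, nav: float) -> dict[str, float]:
    """Weight of each group (keyed by the named classification field) as % of NAV."""
    totals: dict[str, float] = defaultdict(float)
    for h in holdings:
        totals[getattr(h, dimension)] += h.market_value
    return {group: 100.0 * value / nav for group, value in totals.items()}


def concentration_breaches(
    holdings: list[Holding], dimension: str, nav: float, max_pct: float
) -> dict[str, float]:
    """Groups whose weight exceeds the limit; an empty dict means the rule passes."""
    weights = group_weights(holdings, dimension, nav)
    return {group: w for group, w in weights.items() if w > max_pct}


holdings = [
    Holding("A", "IssuerX", "Equity", 60.0),
    Holding("B", "IssuerY", "FixedIncome", 30.0),
    Holding("C", "IssuerX", "Equity", 10.0),
]
print(concentration_breaches(holdings, "issuer", nav=100.0, max_pct=40.0))
# {'IssuerX': 70.0}
```

Because the dimension is just a field name, the same function covers asset class, sector, country, currency, rating, or issuer limits.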

Value rules set absolute thresholds: “government bond holdings must not fall below $1M” or “no single position may exceed $5M market value.”
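The two examples describe different shapes of value rule: an aggregate bound over a filtered set of holdings, and a cap on each individual position. A hypothetical sketch of both, assuming market values are already expressed in the reporting currency:

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Holding:
    security_id: str
    asset_class: str
    market_value: float


def aggregate_value_ok(
    holdings: list[Holding],
    selects: Callable[[Holding], bool],
    min_value: Optional[float] = None,
    max_value: Optional[float] = None,
) -> tuple[bool, float]:
    """Sum the market value of selected holdings and test it against the bounds."""
    total = sum(h.market_value for h in holdings if selects(h))
    too_low = min_value is not None and total < min_value
    too_high = max_value is not None and total > max_value
    return not (too_low or too_high), total


def positions_over_limit(holdings: list[Holding], max_value: float) -> list[str]:
    """Per-position variant: identifiers of any single holding above the cap."""
    return [h.security_id for h in holdings if h.market_value > max_value]


holdings = [
    Holding("GOV1", "GovernmentBond", 600_000.0),
    Holding("GOV2", "GovernmentBond", 500_000.0),
    Holding("EQ1", "Equity", 6_000_000.0),
]
# "Government bond holdings must not fall below $1M"
print(aggregate_value_ok(holdings, lambda h: h.asset_class == "GovernmentBond", min_value=1_000_000))
# (True, 1100000.0)
# "No single position may exceed $5M market value"
print(positions_over_limit(holdings, 5_000_000))
# ['EQ1']
```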

Exclusion rules check holdings against prohibited lists: sanctioned countries, tobacco companies, controversial weapons manufacturers. The list is maintained independently from the rule, which introduces a versioning dependency explored later in this article.

Approved list rules are the inverse: holdings must appear on an authorised list. They are common in institutional mandates where the investment policy statement restricts the investable universe.
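Exclusion and approved-list checks are both set-membership tests; they differ only in which outcome of the test fails. The sketch below assumes lists are keyed by security identifier and modelled as immutable versions (`ListVersion` and both check functions are hypothetical). Returning the list version with each result is what allows a result to be tied to the exact list contents it was evaluated against.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ListVersion:
    """One immutable version of a maintained list (restricted or approved)."""
    list_id: str
    version: int
    members: frozenset[str]


def exclusion_check(security_ids: list[str], restricted: ListVersion) -> dict:
    """Fail if any holding appears on the restricted list."""
    hits = [s for s in security_ids if s in restricted.members]
    return {"passed": not hits, "violations": hits,
            "list_id": restricted.list_id, "list_version": restricted.version}


def approved_list_check(security_ids: list[str], approved: ListVersion) -> dict:
    """Fail if any holding is missing from the approved list."""
    misses = [s for s in security_ids if s not in approved.members]
    return {"passed": not misses, "violations": misses,
            "list_id": approved.list_id, "list_version": approved.version}


restricted = ListVersion("Tobacco", 7, frozenset({"SMOKECO"}))
approved = ListVersion("IPS-Universe", 3, frozenset({"ACME", "GLOBEX"}))
portfolio = ["ACME", "SMOKECO"]
print(exclusion_check(portfolio, restricted))
# {'passed': False, 'violations': ['SMOKECO'], 'list_id': 'Tobacco', 'list_version': 7}
print(approved_list_check(portfolio, approved)["violations"])
# ['SMOKECO']
```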

Item count rules enforce diversification: “the portfolio must hold at least 20 positions” or “no more than 100 line items in fixed income.”
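An item count rule is a filtered count compared against bounds. A minimal sketch with hypothetical names, where the optional predicate scopes the count to a subset such as fixed income:

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Holding:
    security_id: str
    asset_class: str


def item_count_ok(
    holdings: list[Holding],
    selects: Callable[[Holding], bool] = lambda h: True,
    min_count: Optional[int] = None,
    max_count: Optional[int] = None,
) -> tuple[bool, int]:
    """Count the selected line items and test the count against the bounds."""
    count = sum(1 for h in holdings if selects(h))
    too_few = min_count is not None and count < min_count
    too_many = max_count is not None and count > max_count
    return not (too_few or too_many), count


holdings = [Holding(f"EQ{i}", "Equity") for i in range(15)] + [
    Holding(f"FI{i}", "FixedIncome") for i in range(3)
]
print(item_count_ok(holdings, min_count=20))  # (False, 18)
print(item_count_ok(holdings, lambda h: h.asset_class == "FixedIncome", max_count=100))  # (True, 3)
```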

Criteria-based rules evaluate portfolio-level analytics: “average duration must remain below 5 years,” “portfolio yield must exceed 3%,” or “weighted average credit rating must be investment grade.” These rules support boolean combination — multiple conditions joined by AND or OR logic.
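Boolean combination maps naturally onto a small expression tree. The sketch below assumes portfolio analytics are market-value-weighted averages of per-holding figures and supports only duration and yield; a credit-rating criterion would additionally need a mapping from rating letters to a numeric scale, which is omitted. `Condition`, `Combination`, and `evaluate` are illustrative names.

```python
from __future__ import annotations

import operator
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class Holding:
    market_value: float
    duration: float
    yield_pct: float


def weighted_average(holdings: list[Holding], metric: str) -> float:
    """Market-value-weighted average of a per-holding analytic."""
    total = sum(h.market_value for h in holdings)
    return sum(getattr(h, metric) * h.market_value for h in holdings) / total


OPERATORS = {"<": operator.lt, "<=": operator.le, ">": operator.gt, ">=": operator.ge}


@dataclass(frozen=True)
class Condition:
    metric: str
    op: str
    threshold: float


@dataclass(frozen=True)
class Combination:
    logic: str  # "AND" or "OR"
    children: tuple[Criterion, ...]


Criterion = Union[Condition, Combination]


def evaluate(criterion: Criterion, holdings: list[Holding]) -> bool:
    """Recursively evaluate a boolean tree of portfolio-level conditions."""
    if isinstance(criterion, Condition):
        value = weighted_average(holdings, criterion.metric)
        return OPERATORS[criterion.op](value, criterion.threshold)
    results = [evaluate(child, holdings) for child in criterion.children]
    if criterion.logic == "AND":
        return all(results)
    if criterion.logic == "OR":
        return any(results)
    raise ValueError(f"unknown logic {criterion.logic!r}")


holdings = [Holding(60.0, 4.0, 3.5), Holding(40.0, 6.0, 2.0)]  # duration 4.8, yield 2.9
duration_ok = Condition("duration", "<", 5.0)
yield_ok = Condition("yield_pct", ">", 3.0)
print(evaluate(Combination("AND", (duration_ok, yield_ok)), holdings))  # False
print(evaluate(Combination("OR", (duration_ok, yield_ok)), holdings))   # True
```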

Severity Is Not Binary

Each rule carries a severity classification that determines its operational impact:

- Restriction — a hard breach that requires remediation or formal exception

- Alert — a breach that must be investigated and documented

- Warning — an approaching breach that triggers proactive monitoring

- Guideline — an internal best-practice threshold, informational only

The system must distinguish between these tiers because the downstream workflow — who gets notified, what actions are required, what audit trail is generated — differs substantially. A restriction triggers an issue with corrective action tracking. A guideline populates a dashboard panel that nobody is legally obligated to act on. Treating them identically wastes operational bandwidth; failing to distinguish them creates regulatory risk.
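One way to keep the tiers distinct is a single routing table from severity to downstream actions, so the workflow difference lives in data rather than in scattered conditionals. The action names below are hypothetical placeholders derived from the tier descriptions, not a standard vocabulary.

```python
from enum import Enum


class Severity(Enum):
    RESTRICTION = "Restriction"
    ALERT = "Alert"
    WARNING = "Warning"
    GUIDELINE = "Guideline"


# Hypothetical routing table: which downstream actions a failed rule triggers.
WORKFLOW: dict[Severity, tuple[str, ...]] = {
    Severity.RESTRICTION: ("open_issue", "track_corrective_actions"),
    Severity.ALERT: ("open_issue", "require_investigation_notes"),
    Severity.WARNING: ("add_to_watchlist",),
    Severity.GUIDELINE: ("show_on_dashboard",),
}


def actions_for(severity: Severity, passed: bool) -> tuple[str, ...]:
    """Downstream actions for one rule outcome; a pass triggers nothing."""
    return () if passed else WORKFLOW[severity]


print(actions_for(Severity.RESTRICTION, passed=False))
# ('open_issue', 'track_corrective_actions')
print(actions_for(Severity.GUIDELINE, passed=False))
# ('show_on_dashboard',)
```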

The Moving Parts: Why State Is the Enemy

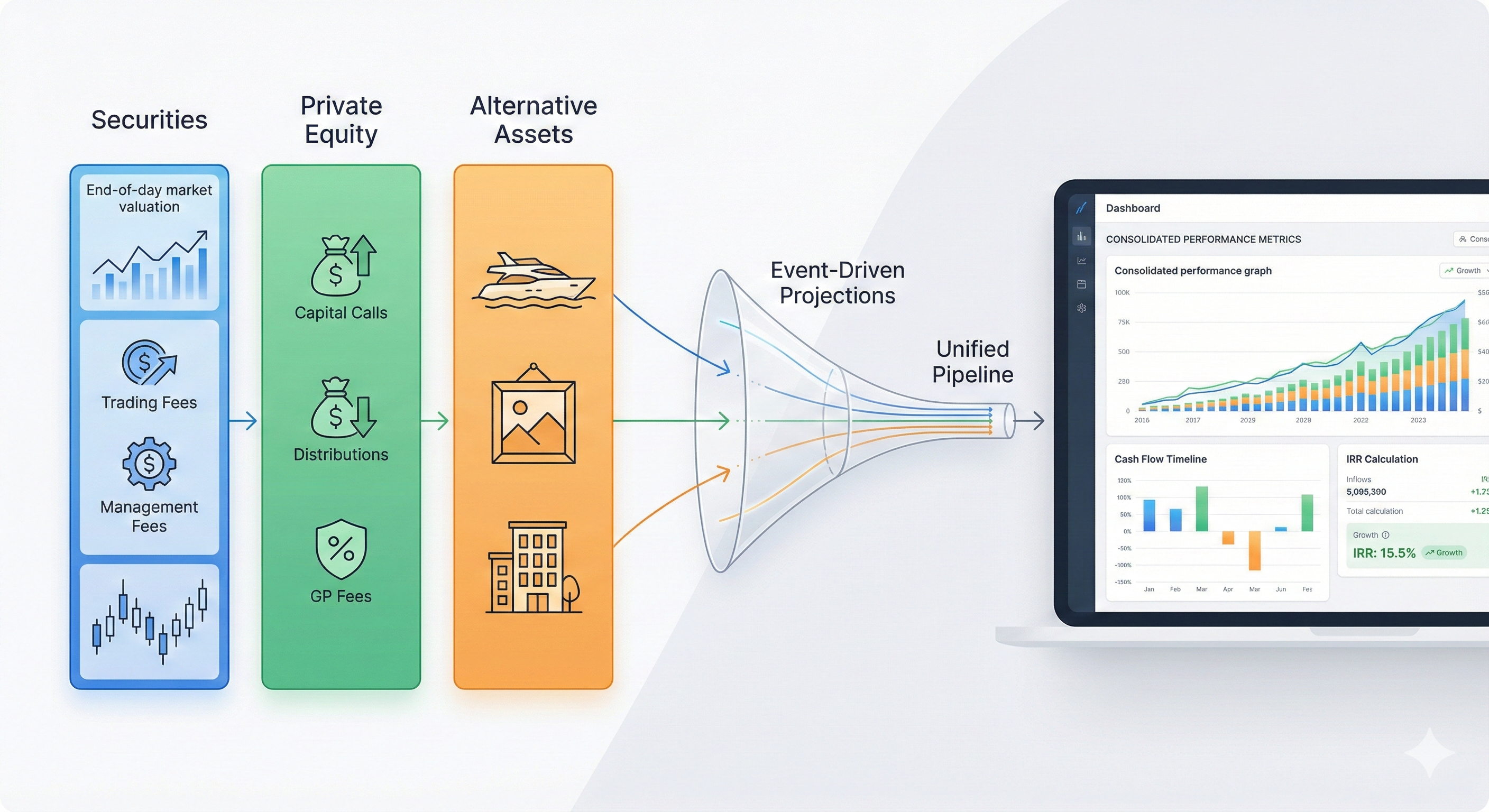

A compliance check is not a database query. It is an assembly operation. The system must gather data from multiple independent sources, freeze it at a consistent point in time, and then evaluate rules against that frozen view. Each source introduces its own volatility.

The Evaluation Context

Consider what must be assembled before a single rule can be evaluated:

- Portfolio holdings: position quantities, market values, and percentage-of-NAV for every instrument in the portfolio. These change with every trade, corporate action, or valuation update.

- Instrument classifications: the asset class, sector, country of risk, currency, and credit rating of each security. These are maintained by data vendors and can change — a downgrade from BBB to BB+ turns a compliant position into a breach overnight, without any trading activity.

- Pricing and FX parameters: the costing method (FIFO, average cost, specific lot), gain type, date convention (trade date vs. settlement date), and reporting currency. Different parameter choices can produce different position values, which produce different concentration percentages, which produce different pass/fail outcomes.

- Rule definitions: the thresholds, dimensions, and severity of each rule. Rules evolve — a 10% issuer limit might be tightened to 8% after a risk committee review.

- List membership: the contents of any approved or restricted lists referenced by rules. A security added to the exclusion list yesterday was compliant the day before.

Most of these inputs are not owned by the compliance system itself. Holdings come from portfolio accounting. Classifications come from instrument master data. Prices come from market data. Rules and lists are the only inputs under direct compliance control, and even those change over time.

Assembling these inputs imposes a critical ordering constraint: the snapshot must be captured after the data is loaded but before any rule is evaluated. This is not merely a best practice; it is the only way to guarantee that the recorded inputs correspond exactly to the recorded outputs.
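The ordering constraint can be enforced structurally: load into an immutable context object, persist that object, then hand the same object to the rule engine. A sketch under those assumptions, with the loader, snapshot store, and evaluator injected as hypothetical callables:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Holding:
    security_id: str
    issuer: str
    pct_of_nav: float


@dataclass(frozen=True)
class EvaluationContext:
    """All inputs a rule may read, frozen before any rule runs."""
    portfolio_id: str
    as_of: str
    holdings: tuple[Holding, ...]
    rule_versions: tuple[tuple[str, int], ...]  # (rule_id, version)
    list_versions: tuple[tuple[str, int], ...]  # (list_id, version)


def run_compliance_check(
    load: Callable[[], EvaluationContext],
    store_snapshot: Callable[[EvaluationContext], str],
    evaluate: Callable[[EvaluationContext], dict],
) -> tuple[str, dict]:
    context = load()                       # 1. assemble inputs from upstream systems
    snapshot_id = store_snapshot(context)  # 2. persist them before any rule runs
    results = evaluate(context)            # 3. rules read only the persisted object
    return snapshot_id, results


# Usage with stub callables that record the call order.
log: list[str] = []
ctx = EvaluationContext("Portfolio-4027", "2025-02-04",
                        (Holding("A", "IssuerX", 12.3),), (("IssuerLimit", 3),), ())


def load() -> EvaluationContext:
    log.append("load")
    return ctx


def store(c: EvaluationContext) -> str:
    log.append("snapshot")
    return f"snap-{c.portfolio_id}-{c.as_of}"


def evaluate(c: EvaluationContext) -> dict:
    log.append("evaluate")
    largest = max(h.pct_of_nav for h in c.holdings)
    return {"IssuerLimit": "Fail" if largest > 10.0 else "Pass"}


result = run_compliance_check(load, store, evaluate)
print(result)  # ('snap-Portfolio-4027-2025-02-04', {'IssuerLimit': 'Fail'})
print(log)     # ['load', 'snapshot', 'evaluate']
```

Because `EvaluationContext` is frozen and its collections are tuples, a rule cannot alter what was snapshotted. The guarantee only holds if the evaluator has no path back to the live upstream systems.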

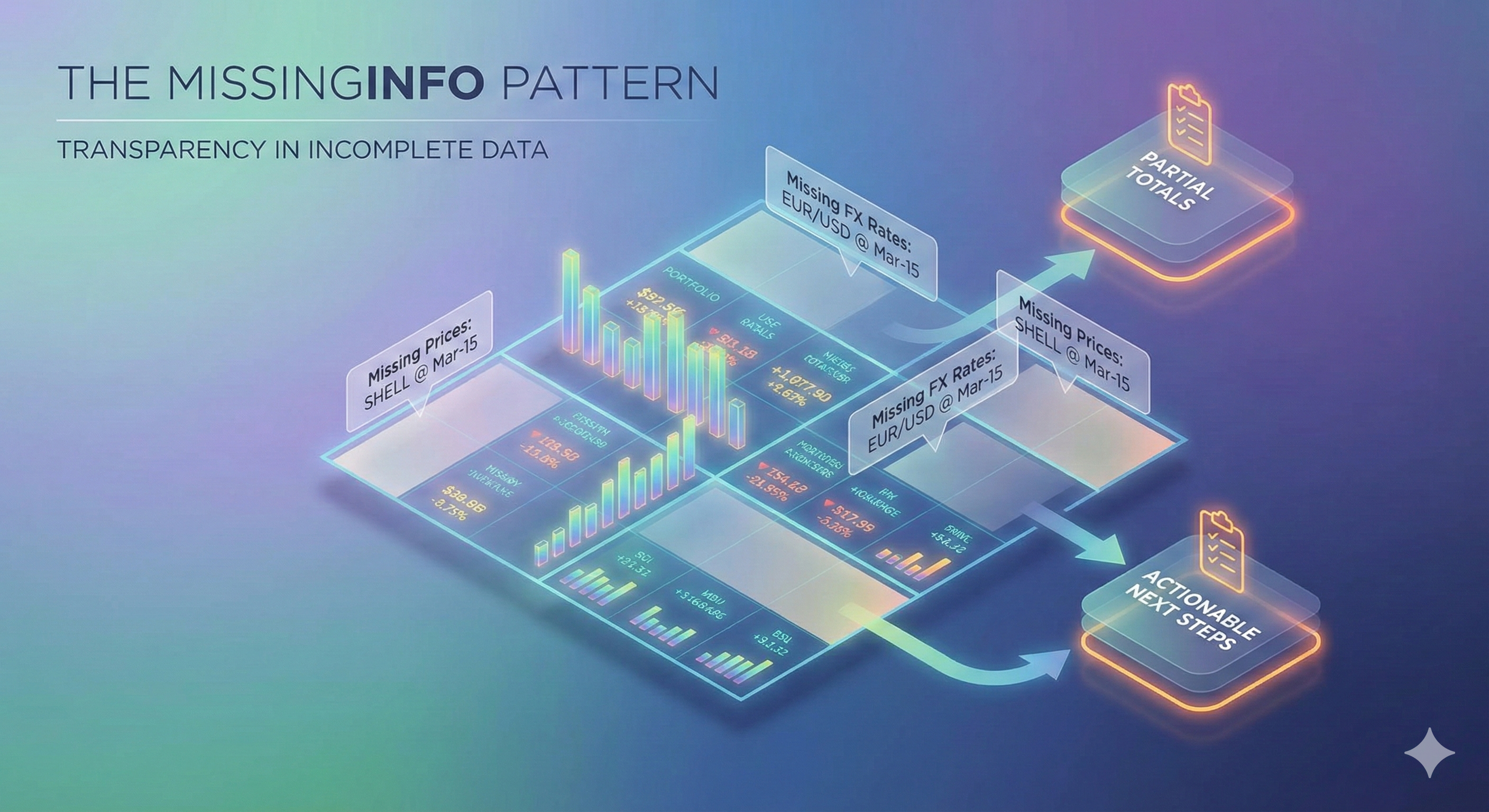

The Reproducibility Problem

Most compliance systems store results. A result might say: “ConcentrationRule-IssuerLimit evaluated against Portfolio-4027 on 2025-02-04. Status: FailAlert. Calculated value: 12.3%. Threshold violated: 10.0%.”

That is useful for a dashboard. It is insufficient for an audit.

The problem is that the inputs — the holdings, classifications, prices, rule configuration, and list contents that produced that 12.3% — are ephemeral. The portfolio has traded since Tuesday. The credit rating of that issuer may have changed. The rule threshold might have been adjusted. If any of these changed, re-running the same check today will produce a different result, and there is no way to prove what the correct answer was on Tuesday.

This is the reproducibility problem, and it divides into five categories of input that must be preserved:

| Reproducibility Input | Why It Changes | Stored by Default? |
|---|---|---|
| Holdings (positions, market values, % of NAV) | Trades, corporate actions, valuations | No; loaded live from portfolio accounting |
| Instrument classifications (asset class, sector, rating) | Vendor updates, rating agency actions | No; loaded live from instrument master |
| Pricing/FX parameters (costing method, date type) | Configuration changes | No; typically hardcoded or per-run |
| Rule configuration (thresholds, dimensions, severity) | Risk committee decisions, policy updates | History exists if event-sourced, but result does not link to a specific version |
| List membership (approved/restricted items) | Ongoing list maintenance | History exists if event-sourced, but result does not link to a specific version |

The solution is to capture a complete snapshot of all inputs at evaluation time, stored alongside the results. Each holding is frozen as an immutable record: security identifier, name, asset class, issuer, sector, country, currency, rating, quantity, market value, percentage of NAV, duration, and yield. The pricing parameters used to generate those market values are stored alongside. References to the exact versions of rules and lists in effect are recorded. And a deterministic hash is computed over the canonicalised holdings data to provide tamper-evidence.
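A sketch of the snapshot record and its hash, assuming JSON as the canonical form. Canonicalisation here means sorting holdings by identifier, sorting keys, and fixing separators, so identical data always serialises to identical bytes. `HoldingSnapshot`, `holdings_sha256`, and `build_snapshot` are hypothetical names, and the identifiers in the usage lines are made up. Floats are serialised with Python's `repr`, which is deterministic within Python; a cross-language system may prefer decimal strings.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class HoldingSnapshot:
    security_id: str
    name: str
    asset_class: str
    issuer: str
    sector: str
    country: str
    currency: str
    rating: str
    quantity: float
    market_value: float
    pct_of_nav: float
    duration: float
    yield_pct: float


def holdings_sha256(holdings: list[HoldingSnapshot]) -> str:
    """Deterministic hash: fixed record order, sorted keys, no whitespace."""
    records = sorted((asdict(h) for h in holdings), key=lambda r: r["security_id"])
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_snapshot(portfolio_id: str, as_of: str, holdings: list[HoldingSnapshot],
                   pricing: dict, rule_versions: dict, list_versions: dict) -> dict:
    return {
        "portfolio_id": portfolio_id,
        "as_of": as_of,
        "holdings": [asdict(h) for h in holdings],
        "pricing": pricing,              # costing method, date type, currency, ...
        "rule_versions": rule_versions,  # rule_id -> version in effect
        "list_versions": list_versions,  # list_id -> version in effect
        "holdings_sha256": holdings_sha256(holdings),
    }


a = HoldingSnapshot("US0001", "Acme Corp 5% 2030", "Corporate Bond", "Acme", "Industrials",
                    "US", "USD", "BBB", 1000.0, 1_020_000.0, 4.1, 4.2, 5.1)
b = HoldingSnapshot("US0002", "Globex Equity", "Equity", "Globex", "Technology",
                    "US", "USD", "NR", 5000.0, 750_000.0, 3.0, 0.0, 1.2)
snap = build_snapshot("Portfolio-4027", "2025-02-04", [a, b],
                      {"costing_method": "FIFO", "date_type": "TradeDate", "currency": "USD"},
                      {"ConcentrationRule-IssuerLimit": 3}, {"Tobacco": 7})
print(snap["holdings_sha256"] == holdings_sha256([b, a]))  # True: input order does not matter
```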

The Storage Trade-Off

Snapshots are not free. A portfolio with 200 holdings produces roughly 40–60KB of JSON per snapshot event. A compliance run evaluating 50 portfolios generates 2–3MB of additional data. Run daily for a year, that is roughly 730MB–1.1GB of snapshot data alone.
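The arithmetic is easy to parameterise. The per-holding size below is inferred from the figures above (40–60KB for 200 holdings, so roughly 200–300 bytes each); compression and event metadata are ignored, and `annual_snapshot_bytes` is a hypothetical helper.

```python
def annual_snapshot_bytes(holdings: int, bytes_per_holding: int, portfolios: int,
                          runs_per_day: int = 1, days: int = 365) -> int:
    """Rough yearly snapshot volume, ignoring compression and metadata overhead."""
    return holdings * bytes_per_holding * portfolios * runs_per_day * days


# 40-60KB for 200 holdings implies roughly 200-300 bytes per holding.
for per_holding in (200, 300):
    total = annual_snapshot_bytes(200, per_holding, 50)
    print(f"{per_holding} B/holding: {total / 1e6:,.0f} MB per year")
# 200 B/holding: 730 MB per year
# 300 B/holding: 1,095 MB per year
```

The same function shows how quickly the volume grows: 1,000 holdings at 300 bytes each, evaluated four times a day across 50 portfolios, comes to about 21.9GB per year.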

Is that acceptable? In the compliance domain, the cost of being unable to reproduce a result — regulatory fines, failed audits, loss of client trust — vastly exceeds the cost of storage. But the trade-off is real, and it intensifies for firms with very large portfolios (1,000+ holdings) or high-frequency evaluation schedules. Options include compressing the snapshot payload before storage or offloading the holdings data to object storage while keeping only a storage key and integrity hash on the event stream.
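A sketch of the offloading option combined with compression, using Python's standard `gzip`, `hashlib`, and `json` modules. `InMemoryObjectStore` is a hypothetical stand-in for a real object store, and the key layout is an assumption. The hash is taken over the uncompressed canonical bytes because the gzip header records a timestamp, so compressing identical data twice can yield different bytes.

```python
import gzip
import hashlib
import json


class InMemoryObjectStore:
    """Hypothetical stand-in for an object store: put/get by key only."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def offload_snapshot(store: InMemoryObjectStore, portfolio_id: str, as_of: str,
                     holdings: list[dict]) -> dict:
    """Compress holdings into the store; return the small event that references them."""
    raw = json.dumps(holdings, sort_keys=True, separators=(",", ":")).encode("utf-8")
    digest = hashlib.sha256(raw).hexdigest()  # hash the uncompressed canonical bytes
    key = f"snapshots/{portfolio_id}/{as_of}/{digest}.json.gz"
    store.put(key, gzip.compress(raw))
    # Only this reference (key + integrity hash) goes on the event stream.
    return {"portfolio_id": portfolio_id, "as_of": as_of,
            "storage_key": key, "holdings_sha256": digest}


def load_snapshot(store: InMemoryObjectStore, event: dict) -> list[dict]:
    """Fetch, decompress, and verify the holdings before anyone relies on them."""
    raw = gzip.decompress(store.get(event["storage_key"]))
    if hashlib.sha256(raw).hexdigest() != event["holdings_sha256"]:
        raise ValueError(f"integrity check failed for {event['storage_key']}")
    return json.loads(raw)


store = InMemoryObjectStore()
holdings = [{"security_id": "US0001", "market_value": 1_020_000.0, "pct_of_nav": 4.1}]
event = offload_snapshot(store, "Portfolio-4027", "2025-02-04", holdings)
print(load_snapshot(store, event) == holdings)  # True
```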

The Issue Lifecycle and Architectural Choices

Detecting a violation is the beginning, not the end. A compliance breach enters a structured workflow that must be auditable from creation through resolution.

From Detection to Closure

A newly detected violation starts in the New state. It is assigned to an analyst and moves to Open. The analyst investigates — perhaps the breach is caused by a market movement rather than a trade, or perhaps the classification data is stale. If corrective action is needed, the issue moves to PendingAction, where each action item has an owner and a target date. Once remediated, the issue is Resolved, and after final review, Closed.
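The lifecycle is a finite-state machine. The transition table below is an assumption reconstructed from the description; in particular, which states may move to Suppressed and which may be reopened will vary by firm. `IssueState`, `ALLOWED`, and `transition` are hypothetical names.

```python
from enum import Enum


class IssueState(Enum):
    NEW = "New"
    OPEN = "Open"
    PENDING_ACTION = "PendingAction"
    RESOLVED = "Resolved"
    CLOSED = "Closed"
    SUPPRESSED = "Suppressed"


S = IssueState
# Assumed transition table; a real workflow may allow more or fewer paths.
ALLOWED: dict[IssueState, frozenset[IssueState]] = {
    S.NEW: frozenset({S.OPEN}),
    S.OPEN: frozenset({S.PENDING_ACTION, S.RESOLVED, S.SUPPRESSED}),
    S.PENDING_ACTION: frozenset({S.RESOLVED}),
    S.RESOLVED: frozenset({S.CLOSED, S.OPEN}),  # assumed: reopen after failed review
    S.SUPPRESSED: frozenset({S.OPEN}),          # assumed: reopen if suppression is revoked
    S.CLOSED: frozenset(),
}


def transition(current: IssueState, target: IssueState) -> IssueState:
    """Return the new state, or raise if the workflow does not permit the move."""
    if target not in ALLOWED[current]:
        raise ValueError(f"illegal transition {current.value} -> {target.value}")
    return target


state = S.NEW
for step in (S.OPEN, S.PENDING_ACTION, S.RESOLVED, S.CLOSED):
    state = transition(state, step)
print(state.value)  # Closed
```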

The Suppressed state deserves attention. It is the system’s acknowledgment that not every detected violation requires remediation. A portfolio might breach a guideline-level threshold by 0.1% due to rounding, or a known corporate action may temporarily distort concentrations. Suppression requires a justification — and that justification becomes part of the permanent audit record. Without a suppression mechanism, operations teams face a choice between ignoring violations informally (which leaves no trail) and creating unnecessary remediation work (which wastes time and obscures genuine risks).

Every state transition — assignment, note, corrective action, resolution, suppression, reopening — is recorded as an immutable event. The complete history of any issue can be reconstructed from its event stream.
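Because every transition is an event, the current view of an issue is a fold over its stream. A minimal sketch with hypothetical event kinds and field names, which also rejects a suppression that lacks a written justification before it can enter the record:

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class IssueEvent:
    issue_id: str
    kind: str    # e.g. "Detected", "Assigned", "NoteAdded", "Suppressed", "Reopened"
    actor: str
    at: str      # ISO-8601 timestamp
    detail: str = ""


# State implied by each event kind; kinds not listed leave the state unchanged.
STATE_AFTER = {
    "Detected": "New", "Assigned": "Open", "ActionAdded": "PendingAction",
    "Resolved": "Resolved", "Closed": "Closed", "Suppressed": "Suppressed",
    "Reopened": "Open",
}


@dataclass
class IssueView:
    state: str = "New"
    assignee: Optional[str] = None
    notes: list[str] = field(default_factory=list)
    suppression_justification: Optional[str] = None


def suppress(issue_id: str, actor: str, at: str, justification: str) -> IssueEvent:
    """Suppression without a written justification is rejected outright."""
    if not justification.strip():
        raise ValueError("suppression requires a justification")
    return IssueEvent(issue_id, "Suppressed", actor, at, justification)


def replay(events: list[IssueEvent]) -> IssueView:
    """Rebuild the current view of an issue purely from its event stream."""
    view = IssueView()
    for e in events:
        view.state = STATE_AFTER.get(e.kind, view.state)
        if e.kind == "Assigned":
            view.assignee = e.detail
        elif e.kind == "NoteAdded":
            view.notes.append(e.detail)
        elif e.kind == "Suppressed":
            view.suppression_justification = e.detail
    return view


events = [
    IssueEvent("ISS-1", "Detected", "system", "2025-02-04T06:00:00Z"),
    IssueEvent("ISS-1", "Assigned", "lead", "2025-02-04T08:00:00Z", "analyst.a"),
    IssueEvent("ISS-1", "NoteAdded", "analyst.a", "2025-02-04T09:30:00Z",
               "Breach of 0.1% caused by rounding, no trade involved"),
    suppress("ISS-1", "analyst.a", "2025-02-04T10:00:00Z",
             "Guideline-level rounding breach; no remediation needed"),
]
print(replay(events).state)  # Suppressed
```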

Check Modes: When Does Evaluation Run?

A compliance system must support multiple evaluation triggers:

- Scheduled batch: the standard overnight or intra-day run that evaluates all portfolios against all applicable rules. Predictable resource consumption, but violations are only detected at the next scheduled interval.

- On-demand by user: a compliance officer triggers evaluation for a specific portfolio or set of portfolios. Useful after a significant trade or market event, but consumes resources unpredictably.

- Event-triggered: evaluation is triggered automatically when a relevant event occurs — a trade settles, a rating changes, a list is updated. Provides the lowest latency for violation detection, but requires careful design to avoid cascading evaluations.
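One common way to limit cascades is to coalesce: collect triggering events over a short window and evaluate each affected portfolio at most once per window, recording which events caused the run. The sketch assumes each event already carries the portfolios it affects; resolving that mapping (for example, which portfolios hold a downgraded issuer) is outside its scope. `TriggerEvent` and `CoalescingTrigger` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriggerEvent:
    kind: str                       # e.g. "TradeSettled", "RatingChanged", "ListUpdated"
    portfolio_ids: tuple[str, ...]  # portfolios whose inputs this event touches


class CoalescingTrigger:
    """Queue at most one pending evaluation per portfolio, however many events arrive."""

    def __init__(self) -> None:
        # dict preserves insertion order, so portfolios drain in first-seen order
        self._pending: dict[str, list[str]] = {}

    def on_event(self, event: TriggerEvent) -> None:
        for pid in event.portfolio_ids:
            self._pending.setdefault(pid, []).append(event.kind)

    def drain(self) -> list[tuple[str, list[str]]]:
        """Hand the batch to the evaluator and start a fresh window."""
        batch = list(self._pending.items())
        self._pending = {}
        return batch


trigger = CoalescingTrigger()
trigger.on_event(TriggerEvent("TradeSettled", ("P1",)))
trigger.on_event(TriggerEvent("RatingChanged", ("P1", "P2")))
trigger.on_event(TriggerEvent("ListUpdated", ("P2", "P3")))
for portfolio_id, causes in trigger.drain():
    print(portfolio_id, causes)
# P1 ['TradeSettled', 'RatingChanged']
# P2 ['RatingChanged', 'ListUpdated']
# P3 ['ListUpdated']
```

As a design choice, evaluation results should not themselves emit trigger events, or the loop can feed itself; the window close also gives each event-triggered run a defined cut-off for what it saw.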

Each mode has different implications for reproducibility. Scheduled runs are straightforward to snapshot because they operate on a well-defined as-of date. Event-triggered runs are harder because the “as-of” moment is the event itself, and other events may be in flight concurrently.

Implementation Considerations

Building a compliance dashboard forces a series of architectural choices — how to persist state, where to store snapshots, how to schedule evaluations, and how to integrate with upstream systems. There is no single correct answer. The right combination depends on the firm’s scale, regulatory environment, portfolio complexity, and operational maturity. What matters is that every choice is made deliberately rather than by default.

Regardless of the architecture chosen, one capability is non-negotiable: verification through replay. If a regulator or auditor questions a historical result, the system must be able to reload the exact inputs that were in effect at that moment — the holdings snapshot, the rule definitions, the list memberships — verify their integrity, re-run the evaluation, and confirm that the replayed result matches the stored result exactly. If it matches, the result is proven trustworthy. If it does not, the discrepancy is surfaced immediately for investigation.
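The replay check reduces to three steps: verify the snapshot hash, re-run the rule on the frozen inputs, and diff the outcome against the stored result. The sketch below is hypothetical throughout (`issuer_limit`, `verify_by_replay`, and the dict layout are illustrative). For brevity it embeds the rule configuration in the snapshot, whereas the approach described above records references to rule and list versions that would be resolved from their history.

```python
import hashlib
import json
from collections import defaultdict
from typing import Callable


def holdings_sha256(holdings: list[dict]) -> str:
    """Same canonicalisation as at capture time: sorted records, sorted keys."""
    records = sorted(holdings, key=lambda h: h["security_id"])
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def issuer_limit(holdings: list[dict], rule: dict) -> dict:
    """Example rule: largest issuer weight against a percentage-of-NAV limit."""
    by_issuer: dict[str, float] = defaultdict(float)
    for h in holdings:
        by_issuer[h["issuer"]] += h["pct_of_nav"]
    worst = max(by_issuer.values())
    status = "Fail" + rule["severity"] if worst > rule["max_pct"] else "Pass"
    return {"status": status, "calculated_value": worst, "threshold": rule["max_pct"]}


def verify_by_replay(snapshot: dict, stored_result: dict,
                     evaluate: Callable[[list[dict], dict], dict]) -> dict:
    """Return fields where replay disagrees with the stored result ({} means a match)."""
    # 1. Integrity: the frozen inputs must still hash to the recorded value.
    if holdings_sha256(snapshot["holdings"]) != snapshot["holdings_sha256"]:
        raise ValueError("snapshot hash mismatch: inputs altered since capture")
    # 2. Re-run the rule against the frozen inputs.
    replayed = evaluate(snapshot["holdings"], snapshot["rule"])
    # 3. Exact comparison; any difference is surfaced for investigation.
    keys = stored_result.keys() | replayed.keys()
    return {k: (stored_result.get(k), replayed.get(k))
            for k in sorted(keys) if stored_result.get(k) != replayed.get(k)}


holdings = [
    {"security_id": "A", "issuer": "IssuerX", "pct_of_nav": 12.3},
    {"security_id": "B", "issuer": "IssuerY", "pct_of_nav": 5.0},
]
snapshot = {
    "holdings": holdings,
    "holdings_sha256": holdings_sha256(holdings),
    "rule": {"rule_id": "ConcentrationRule-IssuerLimit", "version": 3,
             "severity": "Alert", "max_pct": 10.0},
}
stored = {"status": "FailAlert", "calculated_value": 12.3, "threshold": 10.0}
print(verify_by_replay(snapshot, stored, issuer_limit))  # {}
```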

This replay capability is the architectural litmus test. A compliance system that cannot reproduce its own results under audit conditions is, ultimately, a system that cannot be trusted.

Closing Thought

The technical challenge of post-trade compliance is not the rules. Concentration arithmetic, list membership checks, and threshold comparisons are straightforward. The challenge is the relationship between rules and time. Portfolios change. Classifications change. Rules change. Lists change. Prices change.

Every firm that builds or buys a compliance system makes these trade-offs, explicitly or by default. Understanding them is the first step toward making them deliberately.